There’s a quiet shift happening in robotics right now—quiet enough that most people haven’t noticed it yet, but profound enough that, in a few years, we may look back and realize it changed everything.

For decades, robots were defined by one simple limitation: they only did exactly what we told them to do.

Every movement, every action, every decision was pre-programmed. Engineers wrote rules, defined boundaries, and carefully controlled environments so machines wouldn’t fail. If something unexpected happened—a misplaced object, a change in lighting, a slightly different angle—the robot would often freeze or break down entirely.

That model worked well in factories. It worked in structured environments where repetition and predictability were guaranteed.

But the real world doesn’t operate like a factory.

And now, for the first time, robots are beginning to adapt to that reality.

They are learning.

From Hard-Coded Rules to Intelligence

To understand how big this shift really is, you have to understand how traditional robots worked.

Imagine a robotic arm on an assembly line. Its job is to pick up a component and place it in a precise location. The position is fixed. The timing is fixed. The motion path is fixed.

Engineers program every detail.

If you move the object even slightly out of position, the robot might miss it entirely.

That’s because the robot doesn’t “see” the object the way humans do. It doesn’t understand context. It doesn’t reason.

It follows instructions.
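To make that brittleness concrete, here is a minimal Python sketch of a hard-coded pick routine. The coordinates, the 5 mm tolerance, and the function name `fixed_pick` are illustrative assumptions, not taken from any real robot controller.

```python
import math

# Hypothetical taught position (millimetres) and gripper tolerance.
PROGRAMMED_PICK_XY = (250.0, 100.0)
GRIPPER_TOLERANCE_MM = 5.0

def fixed_pick(actual_object_xy):
    """Close the gripper at the programmed position, ignoring where the object really is.

    Returns True only if the object happens to lie within the gripper tolerance.
    """
    dx = actual_object_xy[0] - PROGRAMMED_PICK_XY[0]
    dy = actual_object_xy[1] - PROGRAMMED_PICK_XY[1]
    return math.hypot(dx, dy) <= GRIPPER_TOLERANCE_MM

print(fixed_pick((250.0, 100.0)))  # object exactly where the program expects it
print(fixed_pick((262.0, 100.0)))  # object nudged 12 mm sideways
```

With these assumed numbers the first pick succeeds and the second fails, because nothing in the routine ever looks at where the object actually is.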

This approach dominated robotics for over 50 years.

But over the past decade, a new paradigm has emerged—driven largely by breakthroughs in artificial intelligence.

Instead of telling robots exactly what to do, engineers are now teaching them how to figure things out on their own.

And that changes everything.

The Rise of Learning Machines

At the heart of this transformation is machine learning, particularly deep learning.

Rather than relying on explicit programming, modern robots are trained on massive datasets. They learn patterns, relationships, and behaviors by analyzing millions—or sometimes billions—of examples.

This approach loosely mirrors how humans learn.

A child isn’t given a list of instructions on how to recognize a chair. They see thousands of chairs over time, and their brain gradually builds an understanding of what a chair is.

Now, machines are doing something similar.

Research from institutions like the Massachusetts Institute of Technology and Stanford University has pushed the boundaries of what these systems can achieve. Robots can now recognize objects, interpret scenes, and even predict outcomes based on past experiences.

But there’s a crucial difference between recognizing something and interacting with it.

That’s where robotics becomes truly challenging.

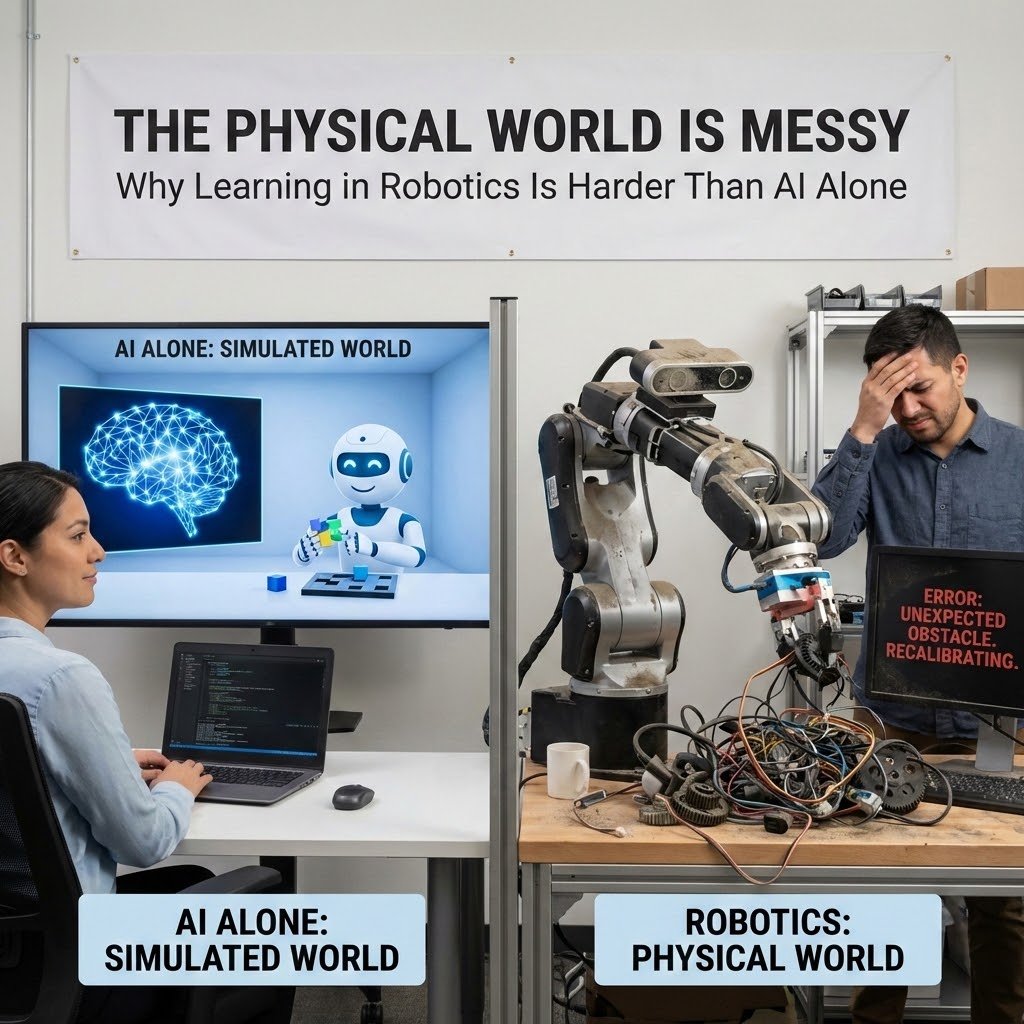

Why Learning in Robotics Is Harder Than AI Alone

If you’ve been following AI developments, you might think: haven’t machines already learned to do incredible things?

After all, AI can now generate text, create images, and even write code.

But robotics introduces a layer of complexity that pure software doesn’t face.

When a robot learns something, it has to apply that knowledge in the physical world.

And the physical world is messy.

Objects have weight. Surfaces have friction. Sensors have noise. Environments change constantly.

A robot doesn’t just need to think—it needs to act.

And every action carries risk.

If an AI model misclassifies an image, nothing physical breaks.

If a robot misjudges a movement, it might drop an object, damage equipment, or injure someone.

That’s why robot learning has progressed more slowly than other forms of AI.

But that gap is closing.

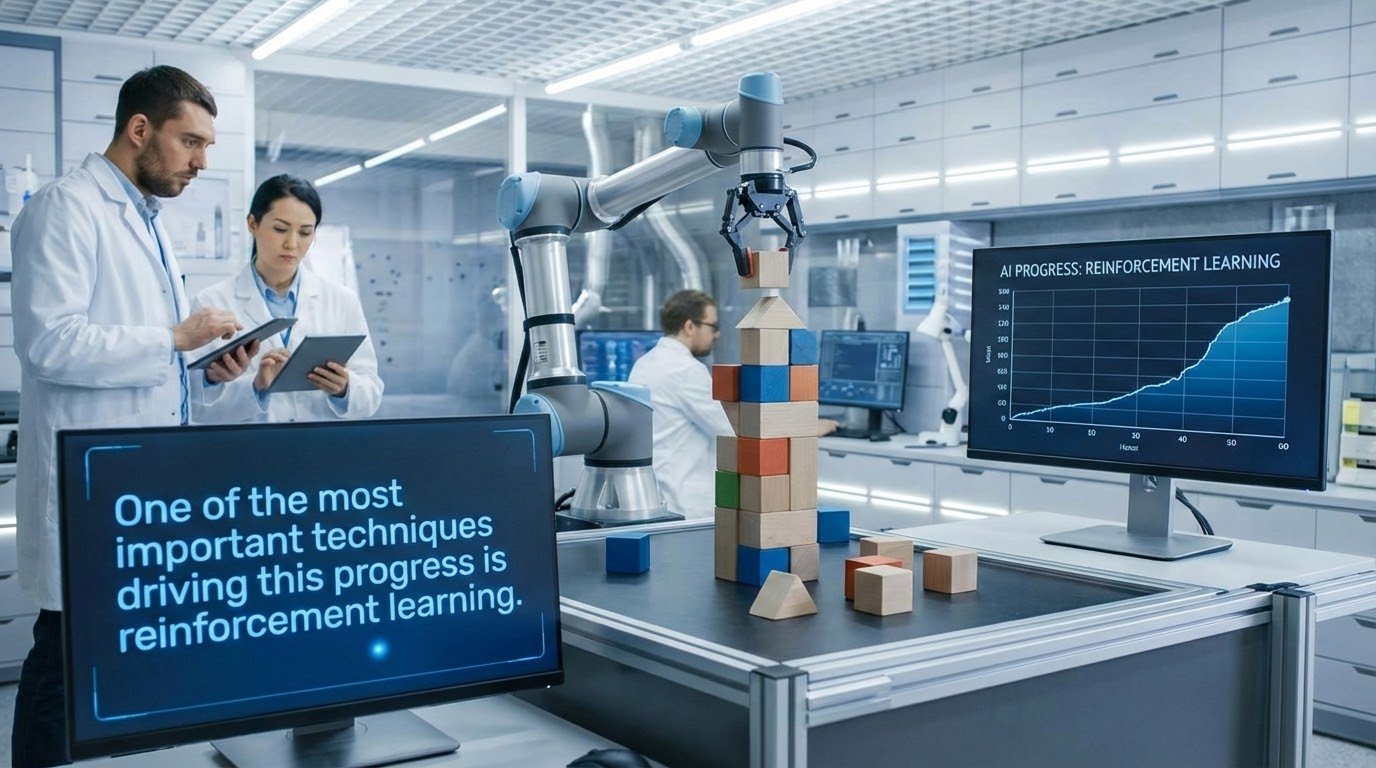

The Role of Reinforcement Learning

One of the most important techniques driving this progress is reinforcement learning.

In simple terms, reinforcement learning allows robots to learn through trial and error.

The robot attempts a task. If it succeeds, it receives a reward. If it fails, it adjusts its behavior and tries again.

Over time, it improves.

This approach has been used to train robots to walk, grasp objects, and navigate complex environments.
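The trial-and-error loop described above can be sketched in a few lines of Python. This is a deliberately tiny version (an epsilon-greedy "bandit", one of the simplest forms of reinforcement learning), not how any particular robot is trained. The three grasp strategies, their success rates, and the function name `train_by_trial_and_error` are assumptions made up for illustration.

```python
import random

def train_by_trial_and_error(success_prob, episodes=5000, epsilon=0.1, seed=0):
    """Learn which strategy succeeds most often, using only success/failure rewards.

    success_prob: hidden chance of success for each strategy (unknown to the learner).
    Returns the learned value estimates and how often each strategy was tried.
    """
    rng = random.Random(seed)
    n = len(success_prob)
    value = [0.0] * n   # running estimate of each strategy's average reward
    count = [0] * n     # number of attempts per strategy
    for _ in range(episodes):
        if rng.random() < epsilon:
            action = rng.randrange(n)                         # explore: try something at random
        else:
            action = max(range(n), key=lambda a: value[a])    # exploit: use the best so far
        reward = 1.0 if rng.random() < success_prob[action] else 0.0
        count[action] += 1
        value[action] += (reward - value[action]) / count[action]  # incremental average
    return value, count

# Hypothetical success rates for three grasp strategies.
value, count = train_by_trial_and_error([0.2, 0.5, 0.9])
best = max(range(len(value)), key=lambda a: value[a])
print("preferred strategy:", best, "attempts per strategy:", count)
```

The learner is never told which strategy is best. It only sees rewards, and its attempts gradually concentrate on the strategy that succeeds most often.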

But in the real world, trial and error can be expensive.

You can’t have a robot dropping thousands of objects or crashing into walls while it learns.

That’s where simulation comes in.

Training Robots in Virtual Worlds

One of the most fascinating developments in robotics today is the use of simulated environments to train machines.

Instead of learning in the physical world, robots can practice inside highly detailed virtual environments.

These simulations replicate physics, lighting, and object interactions with remarkable accuracy.

Companies like NVIDIA have become central to this approach. Their platforms allow researchers to create digital environments where robots can train at scale.

Inside these simulations, robots can perform millions of experiments in a fraction of the time it would take in reality.

They can fail repeatedly without causing damage.

They can explore scenarios that would be dangerous or impractical in the real world.

Once the robot learns a task in simulation, that knowledge can be transferred to a physical machine, usually with some fine-tuning to account for differences between simulated and real physics.

This process, known as sim-to-real transfer, is one of the key reasons robotics is advancing so quickly today.
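A toy example helps show why transfer is not automatic. The Python sketch below tunes a single parameter (how fast to launch a sliding block so it stops 1 m away) in a simulated world, then checks it against a "real" friction value the simulator never saw. It also tries domain randomization, a common sim-to-real technique that this article does not otherwise cover, in which the simulator's physics are varied during training. Every number and name here is an assumption chosen for illustration.

```python
import random

G = 9.81  # gravitational acceleration, m/s^2

def slide_distance(launch_speed, friction):
    """Distance a block slides on a flat surface before friction stops it."""
    return launch_speed ** 2 / (2.0 * friction * G)

def tune_speed(frictions, target=1.0):
    """Pick the launch speed with the smallest average squared miss over the given frictions."""
    candidates = [i / 100.0 for i in range(100, 501)]  # 1.00 to 5.00 m/s

    def mean_sq_error(v):
        return sum((slide_distance(v, mu) - target) ** 2 for mu in frictions) / len(frictions)

    return min(candidates, key=mean_sq_error)

rng = random.Random(0)
nominal_speed = tune_speed([0.5])  # simulator with one fixed friction guess
randomized_speed = tune_speed([rng.uniform(0.4, 0.8) for _ in range(200)])  # varied friction

REAL_FRICTION = 0.6  # hypothetical real-world value, unknown during tuning
nominal_miss = abs(slide_distance(nominal_speed, REAL_FRICTION) - 1.0)
randomized_miss = abs(slide_distance(randomized_speed, REAL_FRICTION) - 1.0)
print(f"miss with fixed simulator:      {nominal_miss:.3f} m")
print(f"miss with randomized simulator: {randomized_miss:.3f} m")
```

In this setup the speed tuned against randomized friction misses by less on the "real" surface. That only holds because the real friction falls inside the randomized range and differs from the simulator's single guess. If the simulator's guess had been exactly right, the fixed version would have won.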

NVIDIA and the New Robotics Stack

To understand how important this shift is, you have to look at the role of computing hardware.

Training AI models requires enormous computational power.

Running those models in real time inside a robot adds a different constraint: the computation has to fit within tight latency and power budgets.

This is where companies like NVIDIA have positioned themselves at the center of the robotics revolution.

Their GPUs are used to train deep learning models. Their AI chips power real-time inference inside robotic systems. Their simulation platforms enable large-scale training.

In many ways, NVIDIA has become the infrastructure layer for modern robotics.

Without this level of computing power, the idea of robots learning autonomously would remain largely theoretical.

Robots That Learn to Grasp, Not Just Move

One of the most difficult challenges in robotics has always been manipulation.

Picking up objects sounds simple—until you try to teach a machine how to do it.

Every object is different.

A cardboard box behaves differently from a glass cup. A soft cloth behaves differently from a rigid tool.

Traditional robots struggled with this variability because they relied on predefined rules.

Modern robots, however, are learning to grasp objects through experience.

They analyze shape, weight, and texture. They adjust their grip dynamically. They learn from previous attempts.
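As a rough illustration of adjusting a grip from the outcome of previous attempts, here is a toy Python feedback loop. It is far simpler than a learned grasping system, and the force thresholds, step size, and function name `learn_grip_force` are hypothetical values chosen for the example.

```python
def learn_grip_force(min_hold, max_safe, start=1.0, step=0.5, max_attempts=50):
    """Adjust grip force attempt by attempt: tighten after a slip, loosen after over-squeezing.

    min_hold: smallest force that keeps the object from slipping (unknown to the robot).
    max_safe: largest force the object tolerates without damage (unknown to the robot).
    Returns the final force and the outcome of every attempt.
    """
    force = start
    history = []
    for _ in range(max_attempts):
        if force < min_hold:
            history.append("slipped")
            force += step          # too loose last time, so squeeze harder
        elif force > max_safe:
            history.append("crushed")
            force -= step          # too tight last time, so back off
        else:
            history.append("held")
            break                  # a working force has been found
    return force, history

# Hypothetical fragile object: needs at least 3.0 units of force, tolerates at most 4.0.
force, history = learn_grip_force(min_hold=3.0, max_safe=4.0)
print(force, history)
```

The robot never sees the two thresholds directly. It only observes whether each attempt slipped, crushed, or held, and uses that to pick the next force.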

Research labs at Carnegie Mellon University have demonstrated systems where robots improve their grasping ability over time, becoming more reliable with each attempt.

This may sound like a small improvement, but it’s actually a major breakthrough.

Because once a robot can reliably manipulate objects, its range of possible tasks expands dramatically.

Real-World Examples of Learning Robots

We’re already beginning to see early examples of these capabilities in real-world systems.

Companies like Boston Dynamics are developing robots that combine mobility, perception, and learning to operate in complex environments.

Their machines can navigate uneven terrain, avoid obstacles, and perform tasks that would have been impossible just a decade ago.

Meanwhile, startups such as Figure AI are working on humanoid robots designed to learn tasks in industrial settings.

These robots aren’t just executing pre-programmed actions.

They are adapting.

They are improving.

They are learning.

The Economic Implications

As robots become more capable, their economic impact grows.

Automation has always been about efficiency.

But learning robots introduce a new dimension: flexibility.

A traditional robot might perform one task extremely well.

A learning robot can perform multiple tasks—and improve over time.

This flexibility makes robotics more attractive across a wider range of industries.

According to data from the International Federation of Robotics, global robot installations continue to rise, with significant growth in sectors like logistics, healthcare, and agriculture.

As AI-powered robots become more adaptable, they are likely to expand into even more areas.

The Road Ahead: General-Purpose Robots

One of the most exciting ideas in robotics today is the concept of general-purpose robots.

Instead of building machines designed for a single task, engineers are working toward robots that can learn a wide range of skills.

This approach mirrors the evolution of computers.

Early computers were specialized.

Modern computers are versatile.

Robots may follow the same trajectory.

A single machine could eventually clean your home, assist with daily tasks, and adapt to new situations through software updates.

We’re not there yet.

But for the first time, the path toward that future is becoming visible.

So What Does This Mean for the Future?

If you step back and look at the bigger picture, one thing becomes clear.

The most important shift in robotics today isn’t mechanical.

It’s cognitive.

Robots are transitioning from tools that execute instructions to systems that learn from experience.

And once machines can learn, their potential is no longer limited to what was programmed in advance.

We may still be years away from fully autonomous household robots.

But the foundation is being built right now.

In research labs.

In simulation environments.

In warehouses and factories.

The robots of the future won’t just follow commands.

They will understand context.

They will adapt to change.

And perhaps most importantly, they will continue improving long after they leave the factory.

So, what should you take away from all this?

The next time you see a robot performing a simple task, remember this:

What looks simple on the surface may represent years of research, millions of training iterations, and a fundamental shift in how machines interact with the world.

We are no longer just building robots.

We are teaching them.

And that distinction may define the next era of technology.

References

MIT Technology Review — https://www.technologyreview.com

Stanford AI Lab — https://ai.stanford.edu

NVIDIA Robotics — https://www.nvidia.com/en-us/industries/robotics/

International Federation of Robotics — https://ifr.org

Thomas Huynh – Admin of RoboZone.top